- Someone's invented a glass jar with a screw-on lid on both ends. Makes sense: By the time you reach the bottom of the peanut butter, most of the oil is gone and what's left is as hard as a rock. Or, alternate lids and gradually work your way toward the middle. If you keep alternating which end is up, the oil won't be as likely to collect on either end and the peanut butter may stay softer until it's all gone.

- Bruce Baker directs us to a Webring of homebrew CPUs. No VLSI. No LSI. Maybe TTL chips. Or discrete transistors. Or relays. I wire-wrapped a COSMAC machine with 2K of RAM back in 1977, and I thought it was a job. Wow.

- As an individual with a “special relationship” to calculus (and a special fondness for filk) I damned near bust a gut watching this. The Slashdot commenters (who are interesting all by themselves) need to get a life, or at the very least a sense of humor. Most of them weren't even alive when Disco was first-run, and so I suspect the comedy shoots right by them.

- Epson has been demonstrating a proof-of-concept model of an A4-sized e-ink display with a mind-boggling resolution of 3104 X 4128 pixels, in monochrome. 385 DPI is more than enough for monochrome graphics (though gray-level depth is not stated) and within a few years, a full-sized letter/A4 ebook reader will not only be possible but inevitable.

- From Jan Westerlink comes a pointer to a wonderful gallery of home-made kites, including one I especially like, especially in light of yesterday's entry: A 4-cell tetrahedral made by rubber-banding 24 bamboo shish-kebob skewers together. Scroll down to pictures 24 and 25. Beautifully done, if a little prickly: If that thing dives toward you, duck!

Odd Lots

The D-Stix Kite Flies Again!

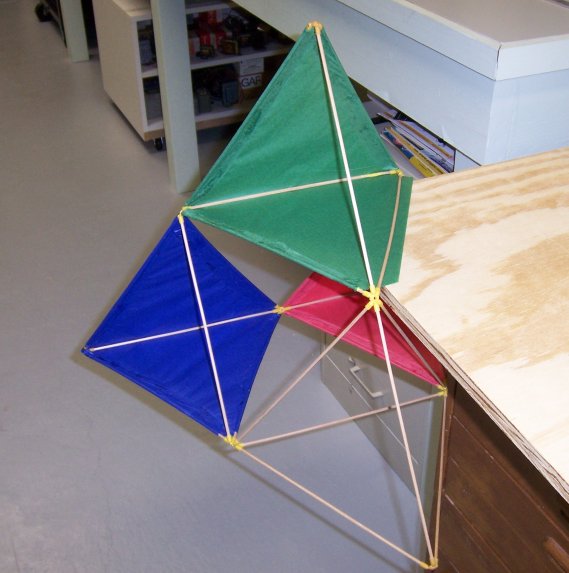

As we concluded our first date back on July 31, 1969, I somewhat apprehensively asked Carol if she would go out flying a kite with me on the following Saturday. I was building a tetrahedral kite out of my D-Stix set, and although my intuition was that this was not the way to impress girls, I gave it a shot, and she accepted. And so it was that we piled into my mom's '65 Biscayne and took my D-Stix tetra out to the huge Forest Preserve field at Irving Park Road and Cumberland.

The kite didn't fly well, if I recall correctly (and in truth, most of what I remember about that Saturday afternoon was Carol) but we both had a great time. An hour or so in, the kite smashed into the ground and broke a couple of sticks, but I salvaged the yellow connector pieces—and when I recently pulled down the butter dish that the D-Stix connectors had been in since who knew when, begorrah, they were still in there, including one with some kite string still tied through the hole.

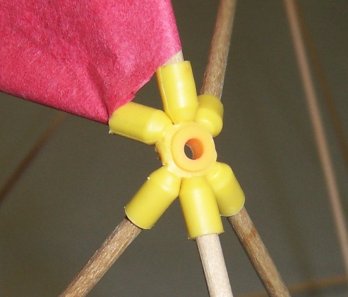

It was a natural. I took the same damned D-Stix pieces, bought some 1/8″ dowels, and I made us another tetrahedral kite. At some point I will create a Web page describing its construction in detail, but I'll just insert a few photos here. A typical joint is at right. The yellow connector originally had eight “ears,” but I snipped two off with a dykes to make the requisite six. (The four outer vertices were six-bangers from which I snipped three.) The paper was ordinary Hobby Lobby artsencrafts tissue, which I glued with Elmer's glue. Mucilage would be better—or at least more historically accurate—but they don't sell that at Hobby Lobby anymore.

Building the kite didn't take much doing. I assembled the D-Stix frame, cut out some conjoined equilateral triangles of tissue, and glued the tissue to the frame. There were a couple of tricky glue joints, but nothing that a protruding corner of a chunk of plywood didn't finesse. All in all, it took maybe an hour.

And it flew. Sorta. Carol and I got it into the air down at the park along Highway 115, but the wind was strong and erratic and I had to hang some tail on it to keep it aimed skyward. It had a tendency to lean left, and after a few minutes of tearing around the great blue sky like a puppy suddenly released from its kennel, it did The Dive, and mashed itself against the grass just like its previous incarnation had, almost 39 years earlier. Two sticks popped out of their sockets, and the tissue ripped in two places, but it's nothing a clever geek can't fix.

It was beautiful. (And weird.) Just like Carol (and me). We laughed, and lay back in the grass, and reflected that life can be good on a brisk Saturday, with a kite and some string and a willingness to let all the rest of it just blow away for a while.

The Risks of Quirky English

As I've mentioned here a time or two, I've been gradually recasting my 1993 book Borland Pascal 7 From Square One for the current release of FreePascal. It's turned out to be a larger project than I had expected for a number of reasons, some of them humbling (I was not as good a writer in 1984 as I am today) and some completely unexpected. The one that came out of left field stems from the fact that Pascal isn't used in the US that much anymore. Most of the audience for the new book is in Continental Europe, and while most of them understand English, they understand correct, formal, university-taught English.

Not slangy, quirky, down-home, Jeff Duntemann feet-up-on-the-cracker-barrel English.

This became clear some time back when I posted the first few chapters for FreePascal users to look at. I got a few emails with detailed critique (for which I am extremely grateful) and there was a certain amount of puzzlement about some of the language. A few of the things that puzzled my European friends were not a surprise:

- QBit stretches and climbs on my chest, wagging furiously as though to say, “Hey, guy, tempus is fugiting. Shake it!”

- Ya gotta have a plan.

- …and write the plan in German, to boot.

- …cats are pets, not hors d'oeuvres on the hoof.

But some were. The expression “to run errands” is not universally understood there. Nor is the word “shack.” (I changed it to “shed.”) I want very much for the book to be accessible to those who are using Pascal the most, and that's a new kind of challenge for me: Writing plain English without resorting to clever coinages and Americanisms.

Alas, I'm not always aware of it when I'm using Americanisms. (I should find a book for English-speakers traveling here, just as there are books on British English for those of us who visit the UK.) There are other problems: Europeans are not intuitive with Fahrenheit temperatures, any more than we're intuitive with Celsius here. I mentioned in the book that it hit 123° in Scottsdale once in the summer of 1996, and although my European friends know that that's hot, when I translate it to Celsius—50°—they gasp. We were gasping too—Keith and I had to shut the Coriolis offices down because the air conditioners were losing the race. Solution: Put temps in Celsius. Americans know damned well how hot it is in Scottsdale. (As I left in 2002, it seemed like most of them had already moved there.)
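For anyone who wants to check the Scottsdale figure, the Fahrenheit-to-Celsius arithmetic fits in a few lines. This is only an illustrative sketch in Python; the function names are hypothetical and appear nowhere in the book. It shows that 123°F works out to a little over 50°C.

```python
def fahrenheit_to_celsius(deg_f: float) -> float:
    """Convert a Fahrenheit temperature to Celsius."""
    # Subtract the 32-degree offset, then scale by 5/9.
    return (deg_f - 32.0) * 5.0 / 9.0


def celsius_to_fahrenheit(deg_c: float) -> float:
    """Convert a Celsius temperature to Fahrenheit (the inverse of the above)."""
    return deg_c * 9.0 / 5.0 + 32.0


if __name__ == "__main__":
    scottsdale_f = 123.0  # the summer 1996 reading mentioned in the text
    scottsdale_c = fahrenheit_to_celsius(scottsdale_f)
    print(f"{scottsdale_f:.0f} F is about {scottsdale_c:.1f} C")
```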

It's a two-edged sword. I like writing the way I talk, and for those who haven't met me, well, I talk the way I write. It's easy. On the other hand, having worked my way through the first hundred-odd pages of the new book, straightening out my language quirks, I find that it now reads very well. It doesn't sound quite as much like me, but that's OK. The idea is to keep Pascal alive, wherever and however it is to be done. Writing for the world—and not strictly for us American barbarians—is a useful skill and good discipline. If I stick with Pascal and Delphi, which I have every intention of doing, you're likely to see more of it in the future.

Odd Lots

- Most people know that the New Age Millerites believe that the world is going to end on December 21, 2012; if that's news to you, here's a nice overview of this deliciously deranged topic, courtesy Frank Glover.

- Several people online, in an almost offhand fashion, have indicated that Vista's knucklehead UAC feature is training people to click “Allow” automatically, no matter what it's asking about. That may be the single greatest design error in Vista, and could over time render Vista as insecure as anything that came before it, and certainly less secure than just working in an LUA under XP.

- A reader has asked me to post an entry on all magazines to which I have subscribed and re-upped at least once, but no longer subscribe to. That would be an interesting collection, and a long entry, but I'll try and get to it soon. One of that group that I might still read if I had enough time is First Things.

- I had a tin toy robot when I was six or seven, and it seems like most of my friends had one or two. So it was a Boomer kid-culture phenomenon, and one I don't see much about these days. Here's a photo gallery from Wired with some nice shots. Most of my early childhood nightmares were about robots and mummies, which suggests that my two subconscious fears (at least according to one amateur Jungian friend of mine from twenty-odd years ago) were of death and berserk machinery. I'm not so sure; that was what most of the cheap horror/sf flicks I saw on Channel 7 were about at that time.

- Another interesting article/discussion on the Fermi Paradox. Me? I think we really are alone. I don't know why just yet. If I had to guess, I'd say that it's because imagination as a mental mechanism is rare. But I've been thinking about this for thirty years and keep coming back to that conclusion.

- Lordy, I remember these from catalogs back in the early 60s. Why do they suggest a filk: Looking Through the Eyes of Badminton! (Thanks to Bishop Sam'l Bassett for the pointer.)

Magazine Meanderings

We brought home two weeks' worth of mail this afternoon, and in the pile were the latest issues of all the print magazines I currently subscribe to: QST, Nuts & Volts, The Atlantic, and Wired. Every month I read them (or major portions of them) and every month I fret for the future of the magazine business, in which I played with great success for fifteen years (1985-2000).

QST isn't doing too badly. It looks pretty much the way it looked twenty years ago, and it fulfills its editorial mission better than any magazine I've seen in recent times. The amateur radio demographic isn't doing well, as our average age is now up in the high 50s somewhere, but the editorial people know their readers, the ad people know their advertisers, and somehow they make it work. I just wish they'd publish a review—or simply an announcement!—of my Carl & Jerry reprints.

And I continue to be amazed at how well Nuts and Volts has grasped what Tim O'Reilly has brilliantly nailed as the Maker Psychology: People who build stuff because they love to build stuff, whether it actually turns out to be useful or not. It's the last real big-time magazine about electronics, and I bought a lifetime subscription in 1980 for $5! I just wish they'd publish a review—or simply an announcement!—of my Carl & Jerry reprints. (Is there an echo in here?)

I'm not as sanguine about The Atlantic, and haven't been for five or six years now. Still, every time my sub runs out, I re-up, having been wowed by an article or two right in the nick of time. They used to publish thought-provoking articles about things I hadn't heard about before, or didn't understand, or both. A couple of issues ago, they published a cover story about…Britney Spears! The readership apparently sent away (from one of those wonderfully eccentric little 1/12 page ads in the back of the mag, doubtless) for authentic Transylvanian torches and pitchforks and began marching on the mag's offices on New Hampshire Avenue in DC. Me, I'll be contrarian here and say that Britney didn't bother me too much. Maybe she was an experiment. Maybe the editorial staff wanted to prove to their bean counters that slutty, washed-up pop singers are not the keys to the kingdom; if so, they succeeded in spades. No, my big gripe with The Atlantic is that they became obsessed with political personalities a few years back, and now it's Obama or one damned Clinton or another on the cover almost all the time. I wouldn't mind articles about political ideas so much, but no, it's all about how desperate Hillary is getting, and how Obama will learn how to walk on water by his inauguration next January. (Hint: Pray for a winter cold enough to freeze the Potomac.) I was ready to let it lapse back in January, and then they published Lori Gottlieb's brilliantly acerbic little spitting-into-the-evolutionary-wind commentary called “Marry Him!” I re-upped. And this month, mirabile dictu! Their cover story is a reasonable layman's overview of threats to the Earth from asteroids and comets. There's the inescapable praise-for-Obama piece and a peculiar backhanded tribute to G. W. Bush, both of which I could have done without, but there was also an anguished piece by an adjunct professor teaching English to night school students telling us that not everyone has what it takes to get a college degree. We'll see who wins come next January when I have to write another check, but my editor's intuition detects a back-office struggle between editors who think we like to read about ideas and editors who think we like to read about, um, our national mental illness. Hey, guys, John-John Kennedy himself couldn't make it work. Whatthehell makes you think you can?

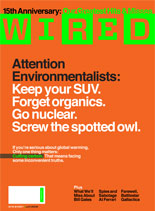

And then there's Wired. Like the trademark alternating color bars on their spine, I subscribe and lapse, subscribe, and lapse. The colors and the page layouts still give me headaches, but to ensure that I'm still young old (rather than old old) I have to keep in touch. And they have their moments: The current issue's cover text is absolutely, unreservedly brilliant: “Attention Environmentalists: Keep your SUV. Forget organics. Go nuclear. Screw the spotted owl.” I wanted to cheer. And then I went right for the cover story, to receive the worst impression that they had gotten the idea for the cover but then chickened out when trying to create the article. A few hundred disjointed words without much in the line of facts qualifies as a rant but hardly an idea piece, and the tiny nuggets of information they tossed in simply made me nuts to find the rest of the story somewhere. In the meantime, what the current issue tells me is that in some quarters, at least, our national mental illness is not politics but ADD. There's almost nothing in the whole issue that's more than a few hundred words long. Wired has for some time been approaching what I might call “magazine theater”: Giving the reader the impression that they're reading a magazine. It's currently paid for, but I think the bar on my spine is about to change colors again.

Still, consider the fate of Sky and Telescope, which I dropped in February after subscribing for over twenty years, because I just wasn't reading it. I took Harper's for a year back in the late 80s. Now I riffle through it in airport newsstands to make sure I'm not missing something. (I'm not.) And don't get me started on Scientific American…

I love magazines. I guess I'm just fussy. But you knew that.

A Gunk Issue With Eraser 5.7

I'm over at my sister-in-law Kathy's house, taking a look at her Dell SX270 computer, which I installed here last summer. She asked me to look at it because it had suddenly gotten sluggish, and I was prepared to find viruses or spyware. Instead I found a 40 GB hard drive that was 98% full, when the last time I was here it was less than 50% full. That was mighty suspicious, and I ran TreeSizeFree to tell me where all that file space had gone. It had gone into a single folder under C: called ~ERAFSWD.TMP, which was hogging a staggering 21 GB of disk space in many hundreds of very large files.

The ~ERAFSWD.TMP folder, as it turns out, is created every time the free Eraser file shredder app begins an erasing run, and is deleted when Eraser finishes. A week or so ago, the machine's video driver crashed for reasons unclear, and had the bad karma to crash when Eraser was doing its default daily scheduled run erasing unused disk space. So Eraser had never released the temp space it allocated while it was running.

The solution is simple: Exit Eraser (it's a tray app) and delete ~ERAFSWD.TMP. Then (to make it less likely to happen again) remove the scheduled erase run, which is set up by default when Eraser is installed. Eraser is most useful when emptying the Recycle Bin, and installs a Recycle Bin context menu item for that purpose. Unless you work with a great deal of confidential information, daily scheduled free space erasing is massive overkill. I do a free space run on demand every few months.
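If you don't have TreeSizeFree handy, the same kind of detective work can be roughed out in a few lines of Python. This is a minimal sketch and makes no claim to be how TreeSizeFree works: the function names `folder_size` and `biggest_folders` are hypothetical, and all it does is total the file sizes under each top-level folder of whatever root path you hand it, largest first. Files it can't read are simply skipped, so the totals are a lower bound.

```python
import os


def folder_size(path: str) -> int:
    """Return the total size in bytes of all regular files under path."""
    total = 0
    # os.walk does not follow symlinked directories by default and
    # silently skips directories it cannot list.
    for dirpath, _dirnames, filenames in os.walk(path):
        for name in filenames:
            full = os.path.join(dirpath, name)
            try:
                if not os.path.islink(full):
                    total += os.path.getsize(full)
            except OSError:
                # Locked or vanished file: ignore it and keep going.
                pass
    return total


def biggest_folders(root: str, top: int = 10) -> list[tuple[str, int]]:
    """Return up to `top` (path, bytes) pairs for root's subfolders, largest first."""
    sizes = []
    with os.scandir(root) as entries:
        for entry in entries:
            if entry.is_dir(follow_symlinks=False):
                sizes.append((entry.path, folder_size(entry.path)))
    sizes.sort(key=lambda item: item[1], reverse=True)
    return sizes[:top]


if __name__ == "__main__":
    # Example root only; substitute the drive or folder you want to inspect.
    for path, size in biggest_folders(os.path.expanduser("~")):
        print(f"{size / 1_000_000_000:8.2f} GB  {path}")
```

Pointed at the root of a drive like Kathy's, a runaway folder such as ~ERAFSWD.TMP should float right to the top of the list.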

It was my own damned fault, as I had installed Eraser on this machine last summer without removing the daily scheduled run. Sometimes even a master degunker generates massive gunk. Mea culpa.

UltraDefrag

As part of my research for Degunking Essentials (basically a condensation and updating of my three Degunking titles) I happened upon a free disk defrag utility that's worth trying: UltraDefrag. It's open source and hosted on SourceForge, meaning it's safe, at least if that's where you get it from.

The defrag utility built into Windows 2000 and XP is a crippled version of Diskeeper. It defrags but does not compact; that is, it does not consolidate the defragmented files toward the low end of the hard drive, and sometimes leaves a large number of contiguous files scattered around on the drive. The commercial version of Diskeeper is quite good and I bought it years ago, but UltraDefrag seems to do everything Diskeeper does, and it's free. UltraDefrag compacts files, separating files and free space. As an option it can also defragment the page file at boot time, something I had never done before. (I don't perceive any increase in responsiveness having done it, though. I doubt it has to be done very often.) The UI is less whizzy, but results are what you're looking for, not flashy graphics. UltraDefrag is small, simple, reasonably fast, and easy to use. Get it here. Highly recommended.
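The difference between defragmenting and compacting is easier to see in a toy model. The Python below is a sketch under loud assumptions: the "disk" is just a list of clusters labeled with a file name (or `None` for free space), and the names `count_fragments` and `compact` are hypothetical. It is emphatically not how UltraDefrag or Diskeeper move data on a real file system; it only illustrates the end state that compaction aims for, with every file in one piece at the low end and all the free space gathered after it.

```python
from typing import Optional

# Toy model: each list element is one cluster, holding a file name or None (free).
Disk = list[Optional[str]]


def count_fragments(disk: Disk) -> dict[str, int]:
    """Count how many separate contiguous runs each file occupies."""
    fragments: dict[str, int] = {}
    previous = None
    for owner in disk:
        # A new run starts whenever a cluster's owner differs from the one before it.
        if owner is not None and owner != previous:
            fragments[owner] = fragments.get(owner, 0) + 1
        previous = owner
    return fragments


def compact(disk: Disk) -> Disk:
    """Return a layout with each file contiguous, packed low, free space last."""
    order: list[str] = []        # files in order of first appearance
    sizes: dict[str, int] = {}   # clusters used by each file
    for owner in disk:
        if owner is None:
            continue
        if owner not in sizes:
            order.append(owner)
            sizes[owner] = 0
        sizes[owner] += 1
    packed: Disk = []
    for owner in order:
        packed.extend([owner] * sizes[owner])
    # Whatever is left over becomes one block of free space at the high end.
    packed.extend([None] * (len(disk) - len(packed)))
    return packed


if __name__ == "__main__":
    disk: Disk = ["A", None, "B", "A", None, None, "B", "C", None, "A"]
    print("before:", disk, count_fragments(disk))
    after = compact(disk)
    print("after: ", after, count_fragments(after))
```

A defrag-only pass would stop once every file's fragment count reached one, wherever the files happened to land; the compaction step is what also pulls the free space into a single block.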

Odd Lots

- Sorry for the recent quietude here; the weekend was a whirlwind, and it took all of yesterday just to catch my breath.

- Scott Kurtz' PvP webcomic celebrated its first ten years by showing us that Brent Sienna actually has eyes. Wow.

- Allan Heim sent me a pointer to a nice article on the Fermi Paradox that expresses a position I have been drifting toward for most of my life: That we are probably alone in the universe, perhaps not only as intelligent, tool-building beings but also as living things, period. The author makes a case that being alone in the universe would be very good news, but not for the reason you might think. Read it.

- Borland is apparently selling their CodeGear division (which develops and supports Delphi) to Embarcadero Technologies, a database tools company. This was not unexpected, and to be honest with you, I can't tell if it's a good idea or not. One of Delphi's most serious problems is that it got so good after five or six years that most people stopped upgrading; I'm amazed at how many people are still using Delphi 6. The cost of the product was also an issue—there is no ~$100 starter edition—and the Turbo Delphi Explorer experiment demonstrated how important the ability to install components was. An amazing number of people wrote to me to say that they downloaded the free product, installed it, fooled with it for a week or so, and then went back to Delphi 6.

- From Mike Sergent comes a pointer to a NYT piece indicating that most people do not have the training to discern the level of subtlety in wine flavor that they claim to, and that a lot of it may exist mostly in our heads anyway. This is not news (to me, at least) but it's nice to see it going mainstream.

- Michael Covington posted a fascinating graph of changes in home prices from Q4 2006 to Q4 2007, suggesting that the “housing bubble” has not been evenly distributed. The coasts have suffered, as have most major cities and trendy places like Colorado's Front Range, but flyover places like Nebraska and Wyoming have posted solid increases in that time. In addition to that, sharp differences by state suggest that state-level housing and banking policies have more to do with housing cost changes than most people are willing to admit.

- Also from Michael is a graph demonstrating that the US economy is not as much of a disaster as Big Media has been hammering on. (I won't invite all the usual hate mail by explaining in detail why this is, as it's pretty obvious if you think about it.)

- The other day I found myself thinking something remarkable (for me): I would rather buy a Mac and run my essential Windows apps in a Parallels window (or in a compatibility box like Crossover) than move to Vista.

Meeting Juliana Leigh Roper

Bill & Gretchen returned home from Madison yesterday with their new baby, Juliana Leigh. They were a little ragged from the stress of the adventure and spending almost a week in a hotel room, but the payoff was difficult to calculate: the little girl sleeping on Gretchen's shoulder. Mission accomplished: Julie is home.

Gretchen dropped her in my lap and I held her for a little while, Gretchen having made sure that her diaper was correctly applied and (as best she could tell) tight. Julie looked around for a while and squirmed a little, but mostly she wanted to fall asleep. Like her sister Katie before her, she is a very placid and un-fussy baby. I heard her cry some when Gretchen changed her diaper a little later, but apart from that she took it easy on Gretchen's shoulder. She lay quietly in her magic stroller (magic in the way it folds down to nothing and slides behind the seats in their van) while we had supper at Sweet Baby Ray's, even with all the fuss that the waitresses were making over her. Being six days old, she still has the ruddiness of complexion that one expects of newborns, and the pale blue eyes that most infants have before their pigment develops. Bill has blue eyes. Gretchen's, like mine, are very brown. Julie's could still go either way.

Nothing more to offer this morning than that. I'm working on Degunking Essentials as I have for the last few days, and will rejoin Carol later today. Tomorrow we launch south to Champaign to witness our younger nephew Matt graduate from the U of I, and with no crisp idea of my free time or connectivity, it's hard to know when I'll post again, but don't despair if you don't see anything before Monday.

Odd Lots

- Here at the condo this morning, I can't bring up squat on the Web because everybody's out there trying to figure out who won the Democratic primaries last night. So I did an absolutely unheard of thing: I went down to the White Hen, got some of their great coffee, and picked up a newspaper. What a notion.

- I'm hearing more and more people say that Wi-Fi doesn't work as well as it used to, which is weird because microwave physics hasn't changed recently. But…look at how many APs Windows can see from wherever you are. From my kitchen table here, NetStumbler sensed twelve APs…and walking around inside our dinky little condo picked up four more. Three of the strongest signals were on default Channel 6—and five out of sixteen were cleverly named “linksys.” I don't think it's the physics, folks.

- After Meetup.com went all-paid (and highly paid) I investigated an alternative called Gatheroo, which later (in response to another damfool lawsuit from somebody) became Zanby. The site's been redesigned and is worth a look if you want to start a meatspace social network where you live. There are both free and paid levels of participation, and it's certainly not as expensive as Meetup.

- Matthew Reed (and lots of others after him) sent me pointers to articles about the recent implementation of memristors, which are a species of passive electronic component postulated in 1971 but not actually implemented until HP researchers made some earlier this year. Whether this interests you varies directly with the strength of your passion for electronics, and whereas I understand the concept now, my head is still spinning trying to figure out what it implies. Everybody's talking about better computer memory, sure…but what could this do in simple analog circuits?

- Jim Strickland sent me a pointer to a YouTube video about a flame triode amplifier/oscillator lashup, and guys, you gotta see this. It's basically a vacuum tube without either vacuum or tube: When the electrodes get hot, it starts amplifying. I don't fully understand the physics yet, but this would be one fantastic high school science fair project. The question arose in our local group as to whether this could be considered steampunkish, and I'm not sure. People in the steampunk era had no problems generating reasonably hard vacuum and blowing glass envelopes. What they had problems with was understanding electrons. Nonetheless, with a big enough flame and some honkin' batteries, you could have done some impressive things back in 1888.

- Global Cooling adherents have been sending me pointers to Watts Up With That, and Icecaps.us. Fascinating reading, including numerous facets of the climate change discussion that you won't see in Big Media. F'rinstance: Weather monitoring installations that were built sixty or seventy years ago out in the leafy countryside have recently become surrounded by new development, buildings, pavement, etc., and as a result are now in the middle of heat islands. What might that do to long-term temperature data? Hmmmm….