Human memory is peculiarly unreliable–but verifiably unreliable. The science is there, and it’s pretty good science, too. In his excellent book, On Being Certain, neurologist Robert A. Burton describes the Challenger study: Within a day of the Challenger disaster, a psychologist asked 106 of his students to write down precisely where they were when the explosion occurred, how they heard about it, and how they felt at that moment. Two and a half years later (hardly a lifetime, though significant for the young) the students were interviewed, and asked to recount the details of what they had written down and given to their professor. Fewer than ten percent of the students recalled all of the details correctly as they had written them. A quarter of the students’ memories were significantly different, and over half had some major differences with what they had recorded at the time.

Thirty months–and an event that stands in many people’s memories (including my own) as one of the most striking events of their lifetime. Intriguingly, even when confronted with their original notes written the day after the event, many students with conflicting memories insisted that their current memories were correct. As one said, “That’s my handwriting, but that’s not what happened.”

Egad.

I’ve been struck in recent years by an increasing number of things that happened that I don’t remember, things I remember incorrectly, and (disturbingly) things that I remember vividly that simply didn’t happen at all. I introduced this topic with a simple example: A friend of mine found a college-era manuscript of a short story I wrote that I just don’t remember writing. Getting old, I guess. The bitchy part is that it’s a pretty good story, and it was completely outside my usual aliens-and-starships turf. Somehow I would have thought it would make a more vivid impression on me.

But we forget things. Odder are things we remember vividly that we in fact remember wrong. Forty-three years ago, when I was in eighth grade, I remember talking to a girl in my class and stumbling on the fact that her father had died. Forty years later, I ran into her again at our grade-school reunion, and it came out that it was her mother and not her father who had died. The original conversation was painful, and I remember painful things very well–you’d think I would have remembered it more accurately. In a different conversation with the same girl, I asked her what high school she would be attending that fall. I remember her indicating one Catholic girls’ school, but in fact (again, verified forty years later) she had attended another. She had never even considered the school that I remember her saying, because it was a fair ways off and the other school was within walking distance.

But I remember both conversations to this day, with the sort of clarity one would expect of a bright if nerdy kid attempting to make conversation with a girl he was a little sweet on. It took considerable courage to talk to her at all, and those are the things of which solid memories are made.

Except when they’re not, I guess.

It was that particular incident that started me looking critically at my own memories, especially those that could be verified somehow. I found a lot of little things that didn’t add up, including a few “flashbulb” memories (as psychologists call them) that one would expect would be vivid and indelible forever. The most recent one is something I chased down just the other day: I vividly remember the first time I kissed Carol–who wouldn’t?–and I remember that it was after we started school in the fall, which would be at least five or six weeks after we met at the end of July. Well, on the back of her 3 X 5 card in my teen-years telephone index box (which still exists among piles of oddments I’m amazed that I still have) is the note “kissed 8/16/69.” That was only two weeks after our most fateful meeting, and school was still another two weeks off. (Remember when school started after Labor Day?)

If I don’t remember that accurately, well, what hope for the rest of it? What kind of life did I actually live?

Stand by: The weirdest part is yet to come.

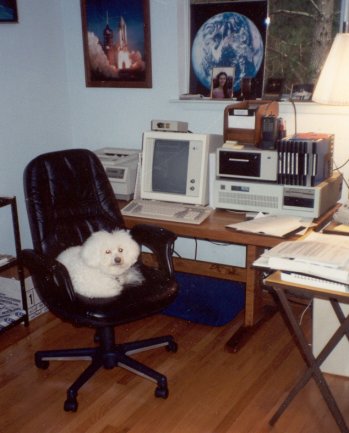

The IBM PC gave us 24 X 80 displays, but that was never enough. Text windowing systems like TopView seemed insane to me, and back in April 1989, when I was doing the “Structured Programming” column in DDJ, I wrote and published an “anti-windowing system” that treated the crippled 24 X 80 display as a scrollable window into a much larger character grid. Full-page text displays eventually arrived: The MDS Genius 80-character X 66-line monochrome portrait-mode text display (left) sat on my desk from 1985 through 1992, when Windows 3.1 finally made text screens irrelevant. (Lack of Windows drivers for the display soon forced MDS into liquidation.) It wasn’t until I bought a 21″ Samsung 213T display in 2005 and started running at 1600 X 1200 that I first recall thinking, “Maybe this is big enough.”

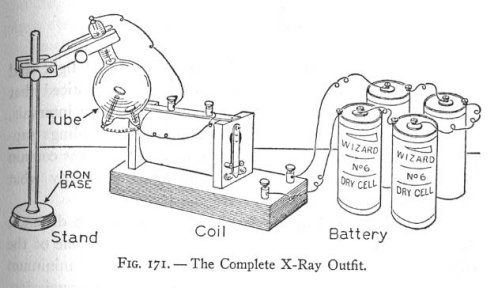

It took a few minutes of flipping through some books in my workshop, but I eventually found what I remembered: That one of my “boys” books contained a description of a tabletop X-ray setup. The book in question is The Boy Electrician, the first volume of many from Alfred Morgan, who later wrote The Boys' First Book of Radio and Electronics and its three sequels, all of which loomed large in my tinkersome youth. The Boy Electrician was originally published in 1913 and is now in the public domain. The 1913 edition has been reprinted by Lindsay Books and I consider it worth having. There was a significant revision in 1943 that added chapters on radio and a few other things, and as best I can tell, the copyright on that edition was not renewed and it too is now in the public domain. A 40 MB PDF of the 1943 edition is

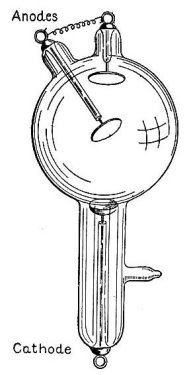

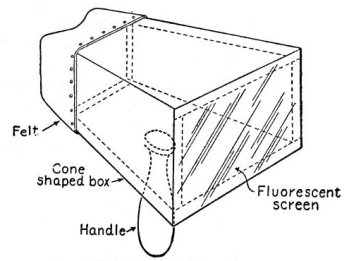

Morgan explains that you can either view images directly with a fluoroscope or expose ordinary photographic plates by placing an object to be X-rayed between the tube and the plate and leaving it there for fifteen minutes. This includes things like purses, mice, or…your hand. If you have the money, he also explains that a hand-held fluoroscope may be constructed by simply coating a sheet of white paper with crystals of platinum barium cyanide. It looks like the fluoroscope screen is used by basically staring at the X-ray tube with the object to be X-rayed between the tube and the paper screen.

It would be interesting to know just how many boys bought the tube and tried to make it work; though given that $4.50 in 1913 would be about $100 today, I doubt it was many. Nor do I know how toxic platinum barium cyanide is, but I'm guessing a little more than iron filings. (On the other hand, my 1962 chemistry set contained a little bottle of sodium ferrocyanide, which sounds much worse than it actually is.)
